Classic CNN Architectures

Why Architecture History Matters

Once the core CNN operations are clear, it becomes easier to read architectures as design choices rather than as a list of names. Two models from your lecture are especially important:

- LeNet, which showed that convolutional networks could work in practice for recognition tasks such as reading handwritten digits,

- AlexNet, which pushed deep CNNs into the center of modern computer vision.

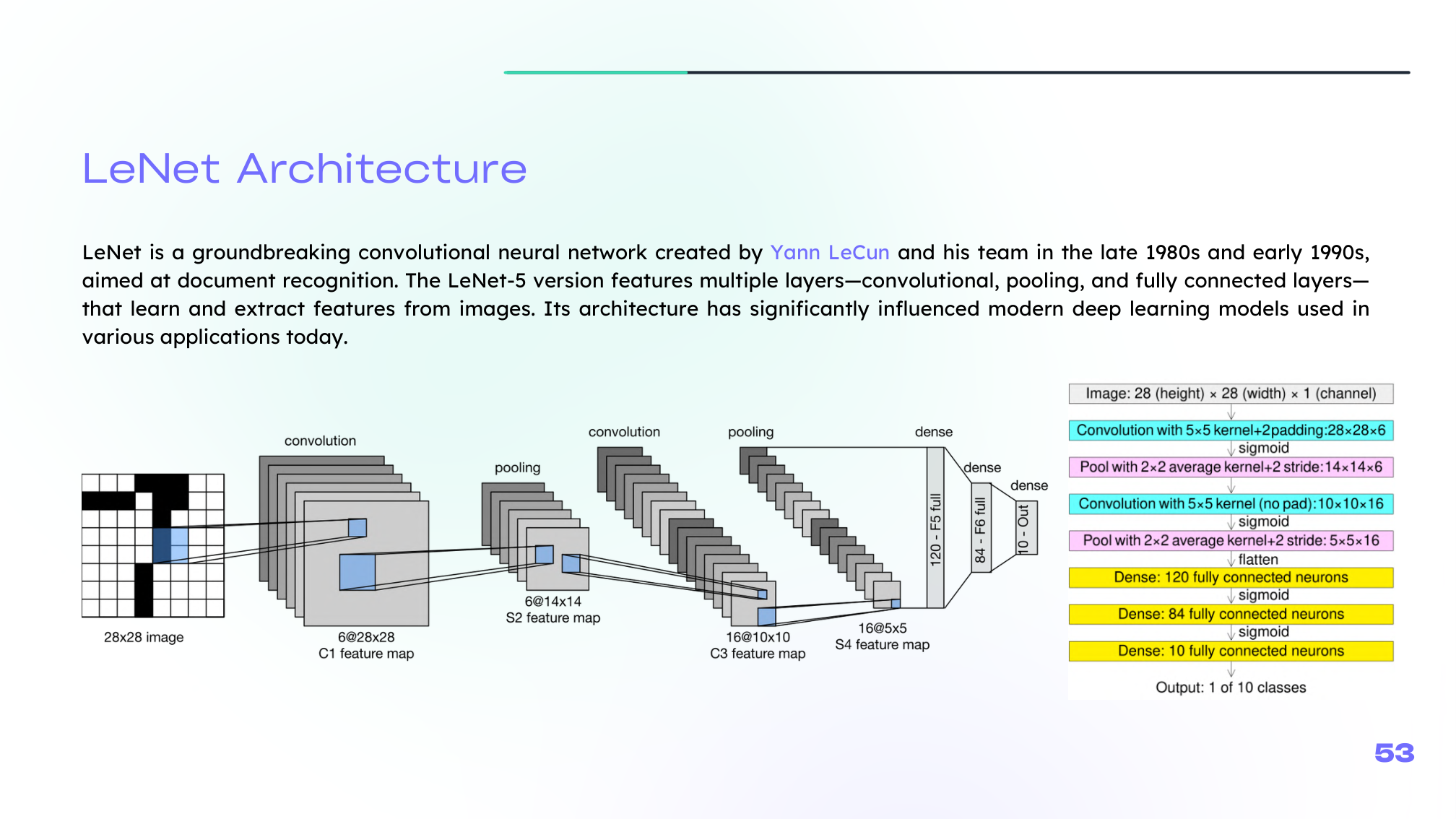

LeNet

LeNet, developed by Yann LeCun and collaborators, is one of the foundational CNN architectures. It combines:

- convolutional layers,

- pooling layers,

- and fully connected layers.

Its importance is not just historical. It established the basic architectural rhythm that still appears in many later CNNs: extract local features, compress spatially, then classify.
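The "extract, compress, classify" rhythm is easiest to see by tracing tensor shapes through the network. The sketch below assumes the commonly cited LeNet-5 layout: a 32×32 grayscale input, 5×5 convolutions, 2×2 subsampling with stride 2, then layers of 120, 84, and 10 units. `conv_out` and `lenet5_shapes` are hypothetical helpers written for this illustration, not part of any library. The sketch tracks shapes only, so activations and the original trainable subsampling coefficients are left out. It also treats C5 as a fully connected layer, which is equivalent here because its 5×5 kernels cover the entire 5×5 input.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output length along one spatial axis for a conv or pooling window."""
    return (size + 2 * padding - kernel) // stride + 1


def lenet5_shapes(size=32):
    """Hypothetical helper: trace (channels, height, width) through a
    LeNet-5-style stack. Only shapes are tracked; no weights are created."""
    shapes = [("input", (1, size, size))]
    c, h = 1, size

    # Stage 1: extract local features, then compress spatially.
    c, h = 6, conv_out(h, kernel=5)            # C1: 6 maps, 5x5 kernels
    shapes.append(("C1 conv 5x5", (c, h, h)))
    h = conv_out(h, kernel=2, stride=2)        # S2: 2x2 subsampling
    shapes.append(("S2 pool 2x2", (c, h, h)))

    # Stage 2: same pattern with more, coarser feature maps.
    c, h = 16, conv_out(h, kernel=5)           # C3: 16 maps, 5x5 kernels
    shapes.append(("C3 conv 5x5", (c, h, h)))
    h = conv_out(h, kernel=2, stride=2)        # S4: 2x2 subsampling
    shapes.append(("S4 pool 2x2", (c, h, h)))

    # Classify: flatten, then fully connected layers.
    shapes.append(("flatten", (c * h * h,)))
    for name, units in [("C5 (as FC)", 120), ("F6", 84), ("output", 10)]:
        shapes.append((name, (units,)))
    return shapes


for name, shape in lenet5_shapes():
    print(f"{name:12} {shape}")
```

The spatial size shrinks 32 → 28 → 14 → 10 → 5 while the channel count grows 1 → 6 → 16, and the 400 flattened features are then handed to the classifier layers.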

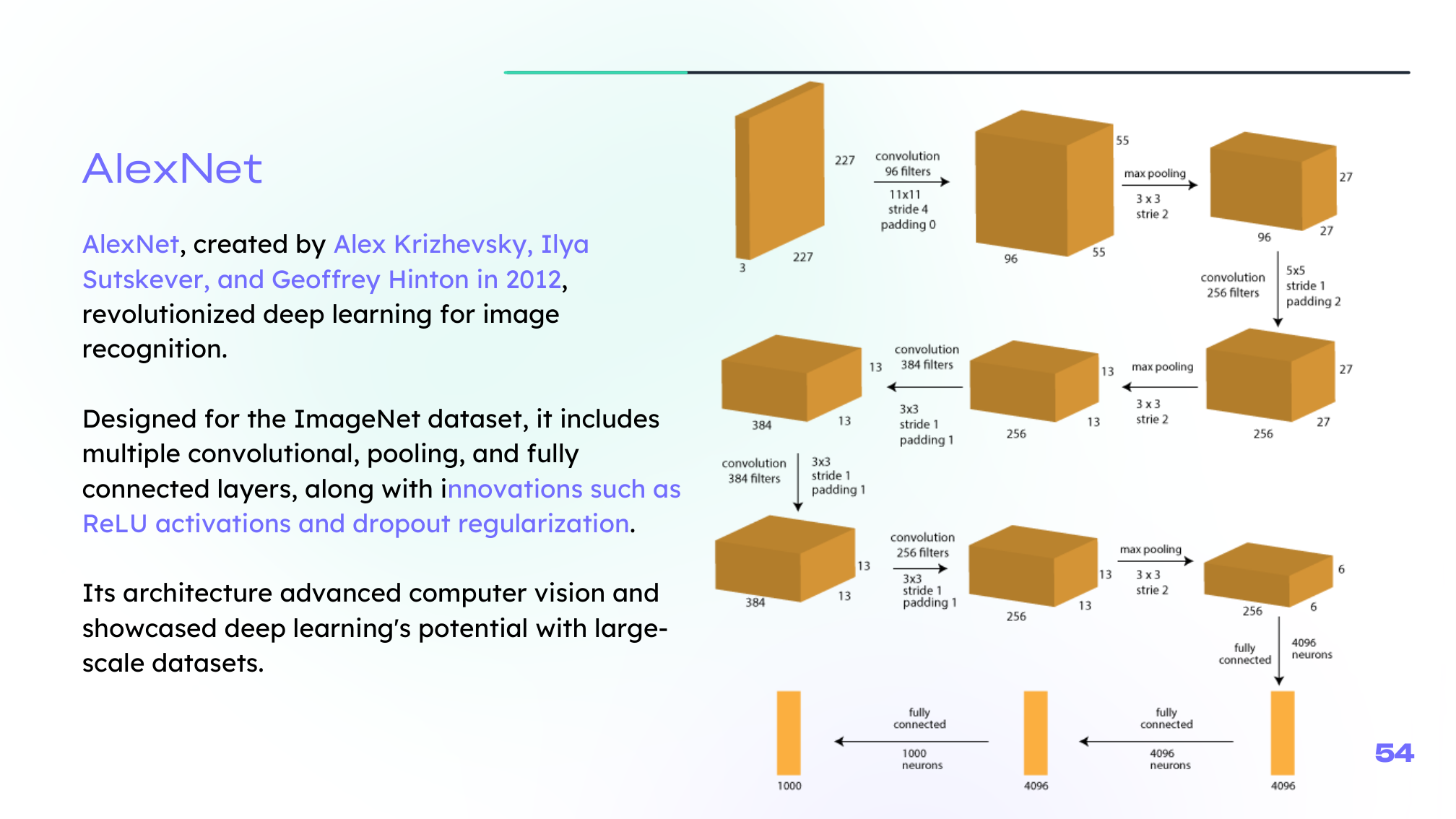

AlexNet

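AlexNet, developed by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton, marked a major turning point in 2012 when it won the ImageNet Large Scale Visual Recognition Challenge (ILSVRC) with a top-5 error rate of 15.3%, against 26.2% for the second-best entry.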

Its impact came from combining several ideas effectively:

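- deeper convolutional stacks (five convolutional layers followed by three fully connected layers),

- ReLU activations, which trained faster than saturating tanh units,

- dropout regularization in the fully connected layers,

- large-scale labeled data (about 1.2 million ImageNet training images),

- and GPU-based training, with the network split across two GPUs.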
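To make "deeper convolutional stacks" concrete, the sketch below traces shapes and counts parameters for an AlexNet-style network. It assumes the widely used single-GPU reading of the architecture: a 227×227×3 input (the paper states 224×224, but 227 is the size that makes the first layer's arithmetic yield 55×55), overlapping 3×3 max pooling with stride 2, and no two-GPU grouping. Ignoring the grouping is one reason the total lands a little above the roughly 60 million parameters the paper reports. Activations and local response normalization are omitted because they change neither shapes nor parameter counts. The layer table and `trace_alexnet` are hypothetical names for this illustration.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output length along one spatial axis for a conv or pooling window."""
    return (size + 2 * padding - kernel) // stride + 1


# Hypothetical layer table for an ungrouped, single-GPU AlexNet-style stack.
# ("conv", out_channels, kernel, stride, padding) or ("pool", kernel, stride)
ALEXNET_FEATURES = [
    ("conv", 96, 11, 4, 0),
    ("pool", 3, 2),
    ("conv", 256, 5, 1, 2),
    ("pool", 3, 2),
    ("conv", 384, 3, 1, 1),
    ("conv", 384, 3, 1, 1),
    ("conv", 256, 3, 1, 1),
    ("pool", 3, 2),
]
ALEXNET_FC = [4096, 4096, 1000]  # two hidden FC layers, then 1000 classes


def trace_alexnet(in_channels=3, size=227):
    """Return per-layer output shapes and the total weight + bias count."""
    c, h = in_channels, size
    params = 0
    shapes = []
    for layer in ALEXNET_FEATURES:
        if layer[0] == "conv":
            _, out_c, k, s, p = layer
            params += k * k * c * out_c + out_c  # weights + biases
            c, h = out_c, conv_out(h, k, s, p)
        else:
            _, k, s = layer
            h = conv_out(h, k, s)  # max pooling has no parameters
        shapes.append((layer[0], (c, h, h)))
    features = c * h * h  # flatten before the classifier
    for units in ALEXNET_FC:
        params += features * units + units
        features = units
        shapes.append(("fc", (units,)))
    return shapes, params


shapes, params = trace_alexnet()
for kind, shape in shapes:
    print(kind, shape)
print(f"total parameters: {params:,}")
```

The spatial size falls 227 → 55 → 27 → 13 → 6 while the channel count rises to 256, and the printed total for this ungrouped variant is 62,378,344. The three fully connected layers account for most of that count, which is part of why dropout was applied there.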

AlexNet did not invent every component it used, but it showed that the combination could decisively outperform older computer-vision pipelines built on hand-engineered features.
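Two of the components in that combination, ReLU and dropout, are simple enough to write out directly. The sketch below is plain Python on lists rather than any framework's API, and `relu` and `dropout` are hypothetical helper names. One deliberate swap: the AlexNet paper applied dropout with probability 0.5 and multiplied outputs by 0.5 at test time, whereas this sketch uses the now-common inverted dropout, which scales surviving units by 1/(1 − p) during training so that inference needs no change. The two agree in expectation.

```python
import random


def relu(xs):
    """ReLU: max(0, x) for each value. Positive inputs pass through with
    gradient 1, so unlike tanh the unit does not saturate for large inputs."""
    return [max(0.0, x) for x in xs]


def dropout(xs, p=0.5, training=True, rng=None):
    """Inverted dropout (hypothetical helper): during training, zero each unit
    with probability p and scale survivors by 1 / (1 - p). At test time,
    return the inputs unchanged."""
    if not training or p == 0.0:
        return list(xs)
    rng = rng if rng is not None else random.Random()
    keep = 1.0 - p
    return [x / keep if rng.random() < keep else 0.0 for x in xs]


activations = relu([-1.5, 0.0, 2.0, 3.5])
print(activations)                           # [0.0, 0.0, 2.0, 3.5]
print(dropout(activations, p=0.5, rng=random.Random(0)))
print(dropout(activations, training=False))  # [0.0, 0.0, 2.0, 3.5]
```

Because each surviving unit is doubled when p = 0.5, the average activation stays about the same during training and at test time, which is the property the original test-time rescaling also preserved.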

Main Takeaway

LeNet and AlexNet illustrate a broader lesson: architectural progress usually comes from combining sound building blocks with enough data, compute, and training discipline.

That same pattern continues in later deep-learning families far beyond CNNs.

Summary

In this lesson we covered:

- Why classic architectures matter for understanding modern CNN design

- The role of LeNet in establishing practical CNN structure

- The role of AlexNet in the deep-learning breakthrough era

- The architectural ideas that made AlexNet influential

- The broader lesson linking architecture, optimization, and scale

This completes the current deep-learning section based on the source material in your DEEPLEARNING repo.