Gaussian Mixtures and the EM Algorithm

From Hard Clusters to Soft Clusters

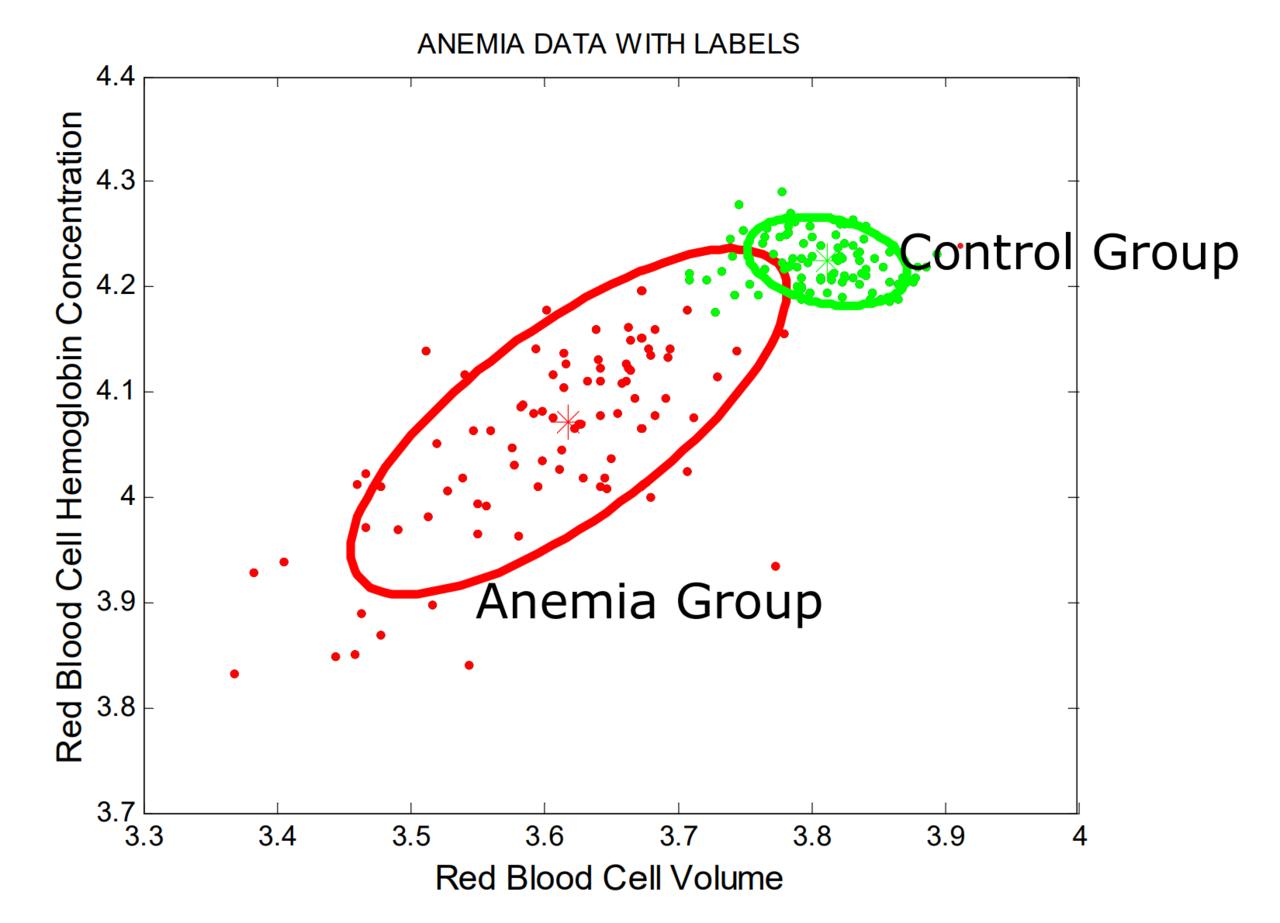

K-means assigns each point to exactly one cluster. Gaussian mixture models (GMMs) take a softer view: a point can belong to several components with different probabilities.

A GMM assumes that the data distribution is a mixture of \(K\) Gaussian components:

\[ p(x) = \sum_{j=1}^{K} \tau_j \mathcal{N}(x; \mu_j, \Sigma_j), \]where:

- \(\tau_j\) is the weight of component \(j\),

- \(\mu_j\) is its mean,

- \(\Sigma_j\) is its covariance matrix.

The EM Algorithm

Because the component responsible for each observation is unknown, GMMs are usually fit with the Expectation-Maximization (EM) algorithm.

E-step

Compute the responsibility of each component for each point:

\[ T_{j,i} = P(Z_i = j \mid X_i = x_i). \]This gives a soft assignment rather than a hard label.

M-step

Update the component weights, means, and covariances using those responsibilities as weights.

The two steps alternate until the likelihood stops improving meaningfully.

Selecting the Number of Components

Because GMMs are probabilistic models, criteria such as AIC and BIC are natural tools for choosing \(K\).

In general:

- lower AIC or BIC means a better trade-off between fit and complexity,

- BIC usually penalizes complexity more heavily than AIC.

When Gaussian Mixtures Are Useful

GMMs are a strong choice when:

- clusters are not well represented by simple Voronoi regions,

- soft membership is meaningful,

- or cluster covariances differ across groups.

Their main drawbacks are:

- sensitivity to initialization,

- convergence to local optima,

- and the need to choose the number of components.

Summary

In this lesson we covered:

- Why Gaussian mixtures provide soft cluster membership

- The mixture-model form of a GMM

- The logic of the EM algorithm

- How responsibilities differ from hard assignments

- Why AIC and BIC help choose the number of components

- When GMMs are more flexible than K-means

Next: We will finish with density-based methods that can detect irregular shapes and outliers.